How to Use LoRA in Stable Diffusion? A Step-by-Step Tutorial 2023

LoRA, short for Low-Rank Adaptation, is a fine-tuning technique that lets you steer the attributes and styles of the images you generate with Stable Diffusion. Instead of retraining the whole model, a LoRA stores a small set of additional weights that are applied on top of a base checkpoint. In this tutorial, we will show you how to use LoRA models with Stable Diffusion, a text-to-image model that creates images from text prompts, through Automatic1111's web-based interface.

Understanding LoRA

LoRA works by learning small, low-rank weight updates for selected layers of the model, most notably the cross-attention layers where the text prompt meets the image being generated. The base model's weights stay frozen, and the LoRA file contains only the compact update. When you load a LoRA, those updates are added to the base weights, nudging the model toward the character, style, or concept the LoRA was trained on. Because only the update is stored, a LoRA is far smaller than a full checkpoint.
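
As a rough illustration of the low-rank idea behind LoRA (Low-Rank Adaptation, as the FAQ below spells out), the sketch below adds the product of two small matrices to a frozen weight matrix. The matrix sizes, the values, and the helper names `matmul` and `add` are made up for illustration; real LoRA files store factor pairs like these for many layers of the model.

```python
# Minimal pure-Python sketch of a low-rank weight update: W' = W + B @ A.
# All sizes and values are illustrative assumptions, not taken from a real model.

def matmul(x, y):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*y)] for row in x]

def add(x, y):
    """Element-wise sum of two same-shaped matrices."""
    return [[a + b for a, b in zip(rx, ry)] for rx, ry in zip(x, y)]

# Frozen base weight: a 4 x 4 identity matrix (16 numbers).
W = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]

# Rank-1 factors: B is 4 x 1 and A is 1 x 4 (8 numbers in total).
B = [[0.5], [0.0], [-0.5], [0.0]]
A = [[1.0, 0.0, 2.0, 0.0]]

delta = matmul(B, A)       # 4 x 4 update built from only 8 stored numbers
W_adapted = add(W, delta)  # what the model uses once the update is applied

for row in W_adapted:
    print(row)
```

The point of the factorization is storage: the 4 x 4 update is fully described by the 8 numbers in `B` and `A`, and the saving grows quickly as the layer gets larger.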

Sourcing LoRA models for Stable Diffusion

To use LoRA in Stable Diffusion, you need LoRA models that are compatible with the Stable Diffusion checkpoint you are using. LoRA models are trained on specific datasets and domains, such as anime, portraits, or landscapes. You can find LoRA models from various sources, such as:

- Civitai, which offers a large, browsable collection of LoRA models along with sample images and usage notes from their creators.

- Hugging Face, which also hosts many LoRA models, although they are listed alongside full checkpoint models and can be harder to pick out.

- Community Discord servers, such as the Stable Diffusion Discord, where users and developers share LoRA models and offer support and feedback.

What are the advantages of LoRA Stable Diffusion models?

LoRA Stable Diffusion models offer several key advantages:

- Efficiency: LoRA models are significantly smaller and quicker to train compared to fully fine-tuned Stable Diffusion models. This enhances their accessibility to a broader range of users and devices.

- Flexibility: LoRA models can be effectively employed to fine-tune Stable Diffusion models for a wide array of tasks, including text-to-image generation, image editing, and style transfer.

- Control: Users benefit from enhanced control over the generation process with LoRA models, enabling them to fine-tune the model’s output to their specific requirements.

- Stability: Because the base model's weights stay frozen, a LoRA can be less prone to overwriting what the base model already knows than a full fine-tune, although a poorly trained or overly strong LoRA can still introduce artifacts.

Moreover, LoRA Stable Diffusion models present specific advantages, such as:

- Smaller File Sizes: LoRA models are considerably more compact than fully fine-tuned Stable Diffusion models, simplifying storage and sharing.

- Low Inference Overhead: A LoRA is applied on top of the base model's weights, so generation speed stays close to that of the base model, and you can switch styles without loading a separate full checkpoint for each one.

- Improved Performance on Specific Tasks: A LoRA trained on a narrow subject or style often reproduces it more faithfully than prompting the base model alone.
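
To make the size advantage concrete, here is a back-of-the-envelope comparison for a single layer. The 768 x 768 layer size and the rank of 8 are illustrative assumptions, and the function names are hypothetical; actual savings depend on which layers a given LoRA covers and on its rank.

```python
# Compare the numbers stored for one layer: full fine-tune vs. low-rank update.
# Layer size (768 x 768) and rank (8) are assumptions chosen for illustration.

def full_params(d_out: int, d_in: int) -> int:
    """Numbers needed to store a full replacement weight matrix."""
    return d_out * d_in

def lora_params(d_out: int, d_in: int, rank: int) -> int:
    """Numbers needed for the two factors: (d_out x rank) and (rank x d_in)."""
    return rank * (d_out + d_in)

full = full_params(768, 768)
lora = lora_params(768, 768, rank=8)

print(full)                   # 589824
print(lora)                   # 12288
print(f"{lora / full:.1%}")   # 2.1%
```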

How to use LoRA models with Automatic1111’s Stable Diffusion Web-UI?

To use LoRA models with Automatic1111’s Stable Diffusion Web-UI, which is a web-based interface that lets you create images from text prompts using Stable Diffusion, you need to follow these steps:

Step 1: Installing LoRA models

To install a LoRA model, download its file from the source you have chosen and place it in the models/Lora folder inside your Stable Diffusion Web-UI installation. LoRA files usually use the .safetensors format, although older ones may be distributed as .pt or .ckpt files. File names often hint at the subject or style of the LoRA, such as anime_character.safetensors or portrait_style.safetensors.
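
If you download LoRA files often, the copy step can be scripted. The following is a minimal sketch that copies a file into the models/Lora folder mentioned in the FAQ below. The function name `install_lora`, the list of accepted extensions, and the example paths are assumptions for illustration, not part of the Web-UI itself.

```python
import shutil
from pathlib import Path

# Extensions commonly seen for LoRA files; this set is an assumption.
LORA_SUFFIXES = {".safetensors", ".pt", ".ckpt"}

def install_lora(lora_file: Path, webui_root: Path) -> Path:
    """Copy a downloaded LoRA file into <webui_root>/models/Lora."""
    if lora_file.suffix.lower() not in LORA_SUFFIXES:
        raise ValueError(f"unexpected file type: {lora_file.suffix}")
    target_dir = webui_root / "models" / "Lora"
    target_dir.mkdir(parents=True, exist_ok=True)  # create the folder if missing
    return Path(shutil.copy2(lora_file, target_dir / lora_file.name))

# Example call with hypothetical paths:
# install_lora(Path("Downloads/anime_character.safetensors"),
#              Path("stable-diffusion-webui"))
```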

Step 2: Accessing LoRA models

To access LoRA models, open the Stable Diffusion Web-UI in your browser, click the Additional Networks icon under the Generate button, and switch to the LoRA tab. The tab lists the LoRA files found in the models/Lora folder. If a newly added file does not appear, refresh the list or restart the Web-UI. To remove or rename a LoRA, delete or rename its file in that folder.

Step 3: Activating LoRA models

To activate a LoRA model, click its entry in the LoRA tab. This inserts a tag of the form <lora:name:weight> into your prompt. The weight controls how strongly the LoRA affects image generation: 0 means no effect and 1 means full strength, and values somewhere between the two are a common starting point. Negative weights are also accepted and push the image away from what the LoRA learned. For example, if you use a LoRA model that makes the image more colorful with a negative weight, the image will become less colorful.
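
The effect of the strength setting can be pictured as a multiplier on the LoRA's learned change. The sketch below uses three made-up numbers as stand-ins for model weights; the function name `apply_lora` is hypothetical and this is a simplification of what the Web-UI does internally.

```python
# Sketch: how a strength multiplier scales a LoRA's contribution.
# The numbers are made up; only the arithmetic matters.

def apply_lora(base, delta, multiplier):
    """Return base + multiplier * delta, element by element."""
    return [b + multiplier * d for b, d in zip(base, delta)]

base = [1.0, 2.0, 3.0]     # stand-in for a few base-model weights
delta = [0.5, -1.0, 0.25]  # stand-in for the LoRA's learned change

print(apply_lora(base, delta, 0.0))   # [1.0, 2.0, 3.0]   no effect
print(apply_lora(base, delta, 1.0))   # [1.5, 1.0, 3.25]  full strength
print(apply_lora(base, delta, -1.0))  # [0.5, 3.0, 2.75]  pushed the opposite way
```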

Step 4: Generating images

To generate images, type your text prompt, including any LoRA tags, in the prompt box at the top of the Stable Diffusion Web-UI and click the Generate button. The Web-UI applies the LoRA models and weights named in the prompt, together with your other settings, and produces as many images as the batch count and batch size settings specify. Generated images are saved to the Web-UI's outputs folder by default, and you can also save an individual image with the Save button below the gallery. To explore alternatives, change the seed and generate again. To enlarge a result, use the Hires. fix option or the upscalers on the Extras tab.
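
For readers who prefer scripting, the Web-UI can also be driven over HTTP. The sketch below assumes the Web-UI was launched with its `--api` flag and is listening on the default local address `http://127.0.0.1:7860`. The prompt text, the LoRA name `example_style`, and the helper function names are hypothetical, and only a few request fields are shown.

```python
import json
import urllib.request

def build_txt2img_payload(prompt: str, batch_size: int = 1, steps: int = 20) -> dict:
    """Assemble a request body for the Web-UI's txt2img endpoint."""
    return {"prompt": prompt, "steps": steps, "batch_size": batch_size}

def txt2img(payload: dict, base_url: str = "http://127.0.0.1:7860") -> list:
    """POST the payload and return the base64-encoded images from the response."""
    request = urllib.request.Request(
        f"{base_url}/sdapi/v1/txt2img",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)["images"]

# Build a request for four images in one batch; the LoRA tag rides in the prompt.
payload = build_txt2img_payload(
    "a mountain lake at sunrise <lora:example_style:0.7>", batch_size=4
)
# images = txt2img(payload)  # uncomment when a local Web-UI is running with --api
```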

Considerations when using LoRA in Stable Diffusion

When using LoRA in Stable Diffusion, there are some things that you need to consider, such as:

- LoRA models are not universal, and they may not work well with every text prompt or every generative model. You need to experiment with different LoRA models and different text prompts to find the best combination for your desired image.

- LoRA models may have some limitations or biases, depending on how they were trained and what data they were trained on. For example, some LoRA models may not be able to handle complex or rare concepts, or they may favor certain attributes or styles over others. You need to be aware of these limitations and biases, and use LoRA models with caution and respect.

- LoRA models involve trade-offs, depending on how strongly they alter the base model. For example, a LoRA applied at a high weight may reproduce its style faithfully but introduce artifacts or override other parts of your prompt. You need to balance the pros and cons of using LoRA models, and adjust the LoRA weight accordingly.
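
One practical way to experiment is to render the same prompt at several LoRA weights and compare the results side by side. The helper below only builds the prompt strings; the function name `weight_sweep`, the LoRA name `example_style`, and the chosen weights are assumptions for illustration.

```python
def weight_sweep(base_prompt: str, lora_name: str, weights) -> list:
    """Return one prompt per weight, each with a single LoRA tag appended."""
    return [f"{base_prompt} <lora:{lora_name}:{w}>" for w in weights]

prompts = weight_sweep("portrait of a knight", "example_style", [0.4, 0.6, 0.8, 1.0])
for prompt in prompts:
    print(prompt)
```

Keeping the seed fixed while generating from each of these prompts makes the differences easier to attribute to the weight alone.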

Types of LoRA models in Stable Diffusion

There are different types of LoRA models that you can use in Stable Diffusion, depending on what aspect of the image you want to control. Here are some of the common types of LoRA models that you can find:

1. Character LoRA

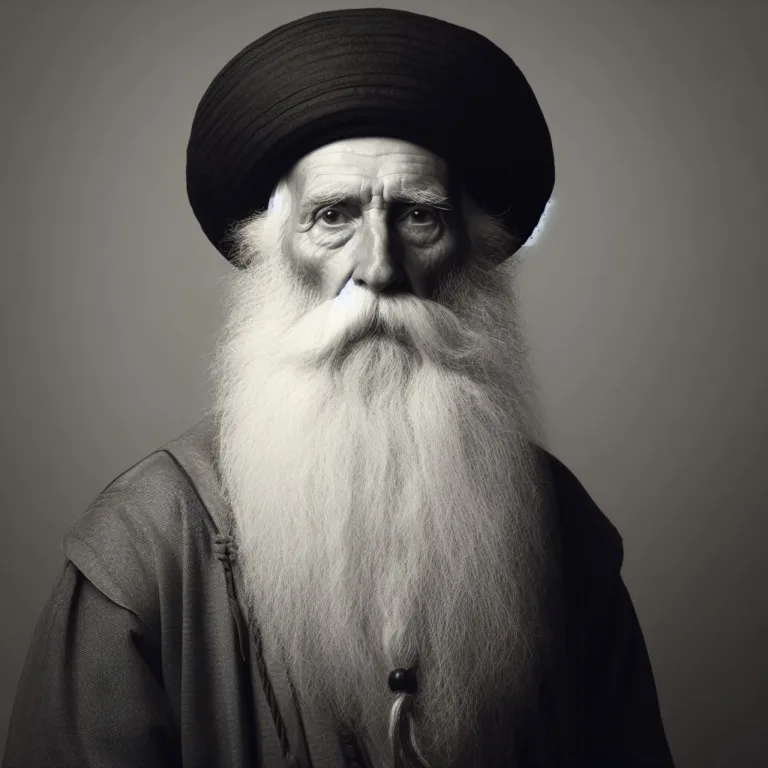

Character LoRA models reproduce a specific character or control a character's attributes, such as appearance or facial expression. For example, you can use a character LoRA model to recreate a known character or to change the hair color, eye color, or expression of a character. Character LoRA models are usually trained on datasets that contain images of characters, such as anime, portraits, or cartoons.

2. Style LoRA

Style LoRA models are LoRA models that can control the style and aesthetic of the image, such as the color scheme, the lighting, or the texture. For example, you can use a style LoRA model to change the mood, the tone, or the genre of the image. Style LoRA models are usually trained on datasets that contain images of different styles, such as paintings, drawings, or photographs.

3. Concept LoRA

Concept LoRA models capture a particular idea or theme, such as a setting, a mood, or a type of scene. For example, you can use a concept LoRA model to steer the subject or the background of the image toward that idea. Concept LoRA models are usually trained on datasets that contain images of different concepts, such as landscapes, objects, or scenes.

4. Pose LoRA

Pose LoRA models are LoRA models that can control the pose and posture of the characters in the image, such as their position, orientation, or movement. For example, you can use a pose LoRA model to change the angle, the direction, or the action of the character. Pose LoRA models are usually trained on datasets that contain images of different poses, such as humans, animals, or chibis.

5. Clothing LoRA

Clothing LoRA models are LoRA models that can control the clothing and accessories of the characters in the image, such as their outfit, their style, or their function. For example, you can use a clothing LoRA model to change the color, the pattern, or the type of the clothing. Clothing LoRA models are usually trained on datasets that contain images of different clothing, such as fashion, costumes, or uniforms.

6. Object LoRA

Object LoRA models are LoRA models that can control the objects and items in the image, such as their shape, their size, or their function. For example, you can use an object LoRA model to change the number, the location, or the category of the objects. Object LoRA models are usually trained on datasets that contain images of different objects, such as furniture, tools, or toys.

Which Stable Diffusion Model is the Best?

Determining the “best” Stable Diffusion model is a matter of subjectivity and hinges on a variety of factors, including specific requirements, intended outcomes, and available resources.

Several Stable Diffusion base models have been released, such as Stable Diffusion 1.5, Stable Diffusion 2.1, and SDXL, and the community has published many fine-tuned checkpoints built on them. Each has its own strengths and weaknesses, and the right choice depends on your subject matter and on which base model your LoRA models were trained for.

Therefore, it is advisable to experiment with different Stable Diffusion models to discern which one aligns most closely with your requirements and achieves the desired results. Additionally, taking into account the experiences and feedback of other users can be beneficial in making an informed decision.

In the end, the “best” Stable Diffusion model may ultimately depend on what works best for you personally and the type of results you aim to attain.

Conclusion of How to Use LoRA in Stable Diffusion?

In this tutorial, we have learned how to use LoRA with Stable Diffusion, a text-to-image model that lets you create images from text prompts. We have learned that:

- LoRA (Low-Rank Adaptation) is a fine-tuning technique that lets you control the attributes and styles of the images that you generate with Stable Diffusion.

- LoRA works by adding small, low-rank weight updates on top of a frozen base model.

- To use LoRA in Stable Diffusion, you need to have LoRA models that are compatible with the generative models that Stable Diffusion uses.

- To use LoRA models with Automatic1111’s Stable Diffusion Web-UI, you need to install, access, activate, and generate images with LoRA models.

- When using LoRA in Stable Diffusion, you need to consider some things, such as the compatibility, the limitations, the biases, and the trade-offs of LoRA models.

- There are different types of LoRA models that you can use in Stable Diffusion, depending on what aspect of the image you want to control, such as character, style, concept, pose, clothing, or object.

A Simpler Alternative to LoRA Stable Diffusion: ImageFlash!

While LoRA Stable Diffusion has undeniably transformed the realm of AI imaging, there’s another noteworthy alternative that deserves your attention: ImageFlash. ImageFlash offers an enticing solution for those eager to explore fresh avenues in artistic expression and image generation.

What truly distinguishes ImageFlash from its competitors is its extensive array of features. For instance, ImageFlash takes the worry out of enhancing your prompts. Neuroflash assists you in guiding the AI by intelligently refining your written prompts with our magic pen. And for specific styles, you have a range of tweaking options at your disposal:

- Realistic Images: If you’re looking to incorporate photos into your visual content without breaking the bank.

- Product Presentation: Craft realistic product images within seconds, elevating your marketing strategy.

- Stock Photography: Exclusive, royalty-free stock photos tailored to your precise requirements.

- Illustrations: Elevate the vision and concept of your products.

- Graphics: In the marketing industry, using graphics is a powerful means of communication. With ImageFlash, the image AI generator, you can streamline the process and align it with your objectives.

Exclusively available to Pro plan users and above, our advanced design tools empower you to effortlessly customize the dimensions of your creations.

These features are ideal for those who are deeply committed to their craft and demand nothing less than excellence when producing visually stunning content. With our user-friendly interface and robust editing capabilities, you can swiftly generate everything from simple logos to intricate illustrations. Plus, we’re constantly rolling out updates and introducing new features, ensuring there’s always something fresh to explore.

FAQ: How to Use LoRA in Stable Diffusion?

Q1: What is LoRA, and how does it complement Stable Diffusion?

A1: LoRA, or Low-Rank Adaptation, is a technique that fine-tunes Stable Diffusion models for specific styles or concepts. It achieves this by adjusting the model’s cross-attention layers where textual prompts and image generation converge. Stable Diffusion, on the other hand, is the overarching text-to-image model that serves as LoRA’s foundation.

Q2: What are the fundamental steps to effectively utilize LoRA models?

A2: To harness the power of LoRA models, follow these steps:

- Install your desired LoRA model by placing its files in the models/Lora folder of the Stable Diffusion web interface.

- Integrate the LoRA model into your textual prompt using the <lora:name:weight> format.

- Generate the image as you typically would, while considering the specific requirements of your chosen LoRA model.

Q3: Can I combine multiple LoRA models to achieve unique image effects?

A3: Yes, LoRA models can be combined to create distinct blends of styles and effects in your generated images. Additionally, they can be used alongside embeddings to unlock even more creative possibilities.

Q4: Are there any important considerations for working with LoRA models?

A4: When working with LoRA models, keep the following in mind:

- Adjust the weight or multiplier in the <lora> keyphrase to control the LoRA model’s influence on the output.

- Some LoRA models may require specific trigger words mentioned in their descriptions to activate certain concepts.

- Ensure compatibility by matching LoRAs with the appropriate Stable Diffusion model versions for the best results.

- Explore recommendations from creators, often included in LoRA model descriptions, for valuable insights.
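
These checks are easy to overlook, so a small helper can act as a reminder. The sketch below assumes you have copied a LoRA's details from its description into a dictionary by hand; the field names, the trigger words, the LoRA name `example_style`, and both function names are hypothetical.

```python
# Hypothetical notes copied from a LoRA's description; field names are assumptions.
lora_info = {
    "name": "example_style",
    "base_model": "SD 1.5",
    "trigger_words": ["examplestyle", "ink wash"],
}

def missing_trigger_words(prompt: str, trigger_words) -> list:
    """Return the trigger words that do not appear in the prompt (case-insensitive)."""
    lowered = prompt.lower()
    return [word for word in trigger_words if word.lower() not in lowered]

def matches_base(info: dict, checkpoint_base: str) -> bool:
    """Check that the LoRA was trained for the same base model as the checkpoint."""
    return info["base_model"].lower() == checkpoint_base.lower()

prompt = "a heron by a river, ink wash <lora:example_style:0.8>"
print(missing_trigger_words(prompt, lora_info["trigger_words"]))  # ['examplestyle']
print(matches_base(lora_info, "SDXL"))                            # False
```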

Q5: How do I source LoRA models for Stable Diffusion effectively?

A5: LoRA models can be found in various places, with Civitai and HuggingFace being popular sources. Civitai offers a user-friendly interface with a vast collection of LoRA models. In contrast, HuggingFace provides variety but categorizes LoRA models alongside checkpoint models.

Q6: What types of LoRA models are available for Stable Diffusion?

A6: LoRA models come in several types, including:

- Character LoRAs for recreating specific characters.

- Style LoRAs for adopting particular artistic styles.

- Concept LoRAs focused on specific ideas or emotions.

- Pose LoRAs for altering character poses.

- Clothing LoRAs for changing attire.

- Object LoRAs for generating various objects.

Q7: How can LoRA models enhance image quality and style variations?

A7: LoRA models, like “epinoiseoffset” and “Detail Tweaker,” can enhance image quality and adjust levels of detail, offering versatile creative possibilities.

Q8: Can you provide examples of LoRA styles or aesthetics available in Stable Diffusion?

A8: Certainly! Styles/aesthetics offered by LoRA models include “Colorwater” for a watercolor style, “Anime lineart” for lineart/colorbook style, and “Anime tarot card art style” for intricate tarot card illustrations, among others.

Q9: How do I add a LoRA to a prompt?

A9: To add a LoRA to a prompt in AUTOMATIC1111, follow these steps:

- Click the Additional Networks icon under the Generate button.

- Switch to the LoRA tab.

- Click on the LoRA model you want to use.

- The LoRA model will be inserted into your prompt, along with its trigger words.

- You can adjust the weight of the LoRA model by changing the number next to it.

For example, to add the GI Landscape 2 LoRA model to your prompt, you would:

- Click the Additional Networks icon.

- Switch to the LoRA tab.

- Click on GI Landscape 2.

- The following text will be inserted into your prompt:

<lora:GI Landscape 2:1.0>

You can then adjust the weight of the LoRA model as needed.

Here is an example of a prompt that uses LoRA:

<lora:GI Landscape 2:1.0>A beautiful landscape painting in the style of Genshin Impact.

This prompt will generate an image of a landscape in the style of Genshin Impact, with the GI Landscape 2 LoRA model applied to it.

Note that you can also add LoRA models to your prompt manually by typing the following syntax:

<lora:model_name:weight>

For example, to add the GI Landscape 2 LoRA model with a weight of 0.5, you would type the following:

<lora:GI Landscape 2:0.5>

You can also use multiple LoRA models in the same prompt. For example, the following prompt uses two LoRA models, GI Landscape 2 and Anime Style:

<lora:GI Landscape 2:1.0><lora:Anime Style:0.5>A beautiful anime-style landscape painting.

This prompt will generate an image of a landscape in the style of anime, with the GI Landscape 2 and Anime Style LoRA models applied to it.
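
The tag syntax above is simple enough to build and read back programmatically. The sketch below handles only the basic `<lora:model_name:weight>` form shown in this answer; the function names and the regular expression are illustrative assumptions, not part of AUTOMATIC1111.

```python
import re

# Matches the basic <lora:name:weight> form only.
LORA_TAG = re.compile(r"<lora:([^:>]+):([^:>]+)>")

def lora_tag(name: str, weight: float = 1.0) -> str:
    """Build a single LoRA tag for a prompt."""
    return f"<lora:{name}:{weight}>"

def parse_lora_tags(prompt: str) -> list:
    """Return (name, weight) pairs for every LoRA tag found in the prompt."""
    return [(name, float(weight)) for name, weight in LORA_TAG.findall(prompt)]

prompt = (
    lora_tag("GI Landscape 2", 1.0)
    + lora_tag("Anime Style", 0.5)
    + "A beautiful anime-style landscape painting."
)
print(prompt)
print(parse_lora_tags(prompt))  # [('GI Landscape 2', 1.0), ('Anime Style', 0.5)]
```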

Q10: What does LoRA mean in Stable Diffusion?

A10: LoRA in Stable Diffusion stands for Low-Rank Adaptation. It is a training technique that allows you to fine-tune Stable Diffusion models to specific concepts, such as art styles, characters, or themes. LoRA models are much smaller than standard Stable Diffusion models, making them easier to train and deploy.

To use LoRA in Stable Diffusion, you can either train a LoRA model yourself or use a pre-trained LoRA model from a third party. Once you have a LoRA model, you can apply it to a Stable Diffusion checkpoint of a compatible version to generate images in the desired style.

Here are some of the benefits of using LoRA in Stable Diffusion:

- Smaller file sizes: LoRA models are much smaller than standard Stable Diffusion models, making them easier to train and deploy.

- Faster training: LoRA models can be trained much faster than standard Stable Diffusion models.

- More control: LoRA models give you more control over the style of the generated images.

Q11: How to generate LoRA?

A11: Creating your own LoRA means training it on images of your concept and then using the trained output to generate new images:

- Step 1: Gather training images. …

- Step 2: Upload training images. …

- Step 3: Train your concept. …

- Step 4: Save the URL of your trained output. …

- Step 5: Generate images.

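Q12: How to install LoRA library?

A12: This question usually refers to LoRa, the long-range radio technology used with Arduino boards, which is unrelated to LoRA for Stable Diffusion. The Arduino LoRa library is installed using the Arduino IDE Library Manager: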

- Choose Sketch -> Include Library -> Manage Libraries…

- Type LoRa into the search box.

- Click the row to select the library.

- Click the Install button to install the library.
